Parsing, Indexing, and Self-Hosting a Library of 10,000 GLP-1 Studies on a 6-Year-Old PC with SQLite, Docling, and a Little Bit of Elbow Grease

I recently wiped my 6-year-old gaming PC to create a home server for myself. So, this weekend, I found myself with a completely fresh server, around 1.8 terabytes of free storage, and in need of an excuse to play with a couple of fun local-first AI tools. I've been interested in the intersection of AI, information retrieval, and academic articles for quite some time now. And, over Christmas, I built a small open-source local MCP called LitLake, which builds a local full-text index of users' Zotero libraries and exposes access via various MCP tools. While I continue to fall behind reported issues and pull requests, it's been a great introduction to the wonders and woes of on-device document processing.

If you're reading this from LitLake, I'm sorry I haven't caught up yet! I appreciate all your help, debugging, and suggestions.

My fresh server and newly available hard drive space seemed like a great excuse to see if I could scale some of the processing workflows I explored with my first release of LitLake to a more meaningful level. My goal is to create a massive medical literature knowledge base for GLP-1 drugs that is locally hosted and supports semantic search.

In this blog, we'll cover the technical aspects of efficiently screening millions of abstracts for topic relevance, sourcing thousands of full-text PDFs, and then locally processing them into a file-based RAG system.

So without further ado, let's jump in!

Compiling the Data

There are two primary data sources that we'll be interacting with in order to compile the necessary data to make a GLP-1 academic literature knowledge base.

-

PubMed - a free and open database that contains metadata for 37 million citations and abstracts for biomedical and life sciences literature. For each citation within the database, PubMed will typically have the article's title, author, and abstract. Significantly, an article's presence within PubMed does not guarantee access to its full text, which would provide the richest information for our knowledge base. For that, we'll need to look to our next data source.

-

PubMed Central (PMC) - an article archive that freely hosts about 7 million full-text articles present within PubMed's citation database. This will be where we grab the actual PDF files for processing. Unfortunately, unlike PubMed's database, it does not contain machine-readable citation metadata like author, year, and abstract, meaning we'll need to cross-reference a candidate's PMCID across both data sources to obtain a full picture of it. Another note I'll throw here is about copyright. While I still have access to Georgia Tech's journal subscriptions, there is a massive legal gray area as to whether or not you can perform this type of processing on full-text PDFs that are not freely hosted. This is another reason why I decided to stick with using the PMC open-access article set, as they explicitly have licenses that allow for these types of transformations.

While in my wildest dreams, I would be able to download and embed the entirety of the PMC open-access article set (around 7 million articles), unfortunately, I do not have 15 TBs of storage readily available, so we'll need to whittle down the set of PDF documents that we process in the first place. If you are someone who has worked with the PubMed database before, you'll know the natural way to start this is with Medical Subject Headings (MeSH). MeSH terms are a hierarchical and canonicalized set of vocabulary terms meant to unify the terminology used to describe a topic within a paper. However, since we have access to an article's abstract–thanks to the PubMed database–and our goal is to keep all processing work local, we can be a little bit more fine-grained with our selection process than a MeSH term search (e.g. we can actually perform a full abstract screening!).

Screening Abstracts Efficiently

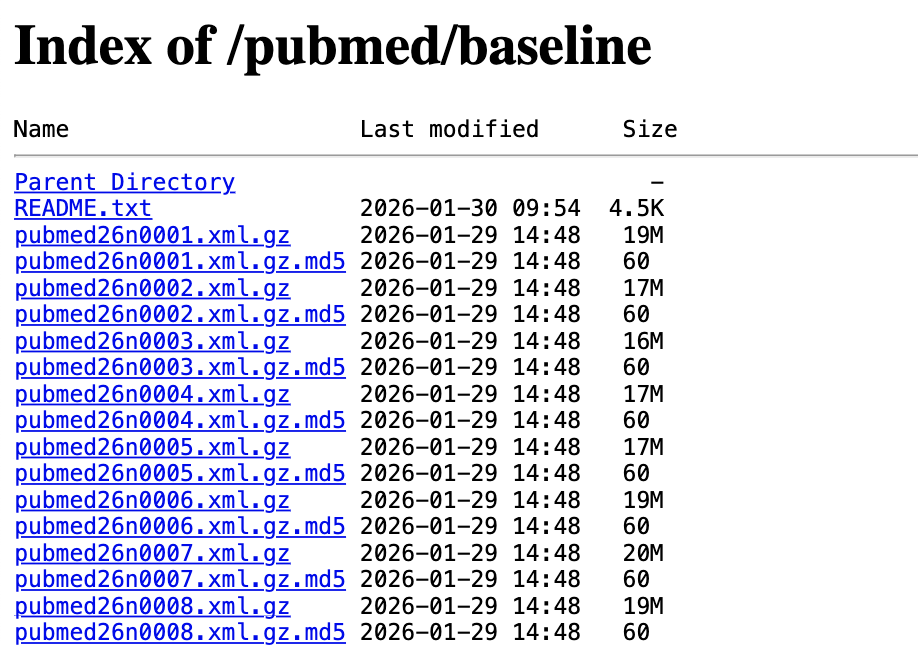

For this step, we first need to download the entirety of PubMed's database locally, so we can screen abstracts on our machine. PubMed publishes a yearly baseline of their database, which is hosted here on their FTP server (FTP lol).

Each .xml.gz file represents a slice of their data in an XML format. Reading one of these files out reveals the following structure…

<PubmedArticle>

<MedlineCitation Status="status" IndexingMethod="method" Owner="owner">

<PMID Version="version">PubMed ID</PMID>

<!-- More fields -->

<Article PubModel="publication model">

<!-- More fields -->

<ArticleTitle>Title of the article</ArticleTitle>

<!-- More fields -->

<Abstract>

<AbstractText Label="section label" NlmCategory="category">

Abstract text content

</AbstractText>

<CopyrightInformation>Copyright information</CopyrightInformation>

</Abstract>

<AuthorList CompleteYN="Y">

<Author ValidYN="Y">

<LastName>Author last name</LastName>

<ForeName>Author first name</ForeName>

<Initials>Author initials</Initials>

<AffiliationInfo>

<Affiliation>Author affiliation</Affiliation>

</AffiliationInfo>

</Author>

</AuthorList>

<!-- More fields -->

<ArticleDate DateType="type">

<Year>Article year</Year>

<Month>Article month</Month>

<Day>Article day</Day>

</ArticleDate>

</Article>

<!-- More fields -->

</MedlineCitation>

<!-- More fields -->

</PubmedArticle>While quite detailed, the structure of individual XML PubmedArticle objects are deeply nested, meaning we'll need to create a quick script that can take a stream of XML objects and output normalized JSON objects with only the fields that we actually care about. In addition to this transformation, we'll need to make sure we only grab PubMed abstracts that are actually present within the PMC open access set. Fortunately, PMC hosts csv files that contain this information. We can use this list of about 7 million articles as an additional input to our script as another pre-screening step. I created a quick script called pmc_json_gen.py and ran it like so:

uv run pmc_json_gen.py \

--input-dir ~/pubmed/baseline \ # the folder containing the PubMed baselines

--output-dir ~/jsonl \ # the jsonl output folder

--pmcid-list ~/pmc/pmcids.txt # the text file containing the list of PMC idsFor each input XML object, the script produces a JSON object on a single line with the below schema:

{

"pmid": "PubMed ID",

"pmcid": "PubMed Central ID",

"title": "Title of the article",

"abstract": "Abstract text content summarizing the study"

}The transformation script produced 6,312,726 title abstract entries normalized and ready for scanning.

Designing the Screening Method

From here we're finally ready to actually design our abstract screening step. Initially, my plan was to do this via a locally hosted Qwen 3.5 0.8B model with the following prompt:

You are screening biomedical papers for inclusion in a GLP-1 literature library used in a retrieval system.

Your goal is to determine whether the paper should be included in the library.

Library scope:

- Papers about GLP-1 receptor agonists or closely related incretin-based therapies relevant to the GLP-1 ecosystem

- Topics of interest include obesity, overweight, type 2 diabetes, cardiometabolic disease, cardiovascular outcomes, kidney/metabolic outcomes, side effects, tolerability, comparative efficacy, mechanisms relevant to therapeutic use, and major reviews/meta-analyses

- Human clinical studies are highest priority

- High-value reviews, meta-analyses, guidelines, and translational papers are also useful

- Papers about tirzepatide may be included because they are highly relevant to the modern GLP-1 treatment landscape, even though tirzepatide is a dual GIP/GLP-1 agonist

Exclude:

- Papers where GLP-1 or related drugs are only mentioned in passing

- Purely basic science papers with no meaningful therapeutic, translational, or clinical relevance

- Animal-only studies, unless they are especially important for understanding a major therapy used in humans

- Papers unrelated to metabolic, obesity, diabetes, or cardiometabolic therapeutic use

- Editorials, letters, or news items without substantive evidence or synthesis

Given the title and abstract, decide:

1. Include or exclude

2. Brief reason

3. Confidence from 0 to 1

4. Primary topic labels

5. Drug names mentioned

6. Study type

7. Population type: human, animal, in vitro, mixed, unclear

Return valid JSON with this schema:

{

"include": true or false,

"confidence": number,

"reason": string,

"primary_topics": [string],

"drug_entities": [string],

"study_type": string,

"population_type": string,

"exclude_reason": string or null

}

While this prompt produces reasonable decisions, and would most likely allow us to whittle down our full article set with good accuracy, unfortunately running this with even Qwen 0.8B locally via Ollama takes up to a minute per abstract. This is due to the fact that the thinking behavior of the model is pre-baked in. Meaning that, instead of just generating an answer immediately, it will first reason extensively about it's decision. While this typically produces more accurate answers, at our scale, this makes it a non-starter. Of course, I'm sure, depending on model choice and careful prompting, I could reduce the total execution time per screened abstract. However, even if I was able to reduce the screening time to just one second, quick napkin math would reveal that screening abstracts in this manner would take…

6,312,726 abstracts = 6,312,726 seconds = ~73 days

While I have been dragging my feet on getting this blog post out, I'd certainly like to get it out this month 😅 So, instead, we'll need to find a faster but still reasonably accurate way to screen articles. For this, we'll turn to regex. Regex allows us to flexibly match a large set of patterns and terms that we suspect may be related to GLP-1 drugs. It allows us to cast a large net and, more importantly, do so efficiently.

Much like how the constant availability of Google Maps has destroyed any hope I have of being able to drive without a GPS system, the release of ChatGPT and other helpful LLMs has dashed any of my hopes of truly learning the intricacies of regex syntax. So, I cannot in good faith say this regex pattern was exclusively designed by me. Luckily, it is fairly simple, so let's walk through the gist of it together.

\b(glp[\s-]?1

|glucagon[\s-]+like[\s-]+peptide[\s-]?1

|glp[\s-]?1[\s-]+receptor[\s-]+agonist[s]?

|glucagon[\s-]+like[\s-]+peptide[\s-]?1[\s-]+receptor[\s-]+agonist[s]?

|incretin(?:[\s-]+based)?

|incretin[\s-]+mimetic[s]?

|semaglutide|liraglutide|dulaglutide|exenatide|lixisenatide

|albiglutide|efpeglenatide|tirzepatide|retatrutide|cotadutide

|survodutide|mazdutide|orforglipron|danuglipron

|beinaglutide|loxenatide)\b

The high-level goal of this pattern is straightforward: check if any of the following GLP-1-related terms are present within the abstract. Each sub-pattern separated by the | character within the parentheses represents a term we are searching for along with variations, if applicable. For example, within the pattern:

glp[\s-]?1

The [\s-]? syntax represents a list of optional characters that could appear at this position. Expanding this out, this means we would match any of the following variations:

glp1 >> no additional characters

glp 1 >> the '\s' character (e.g. a space!)

glp-1 >> a dash character

For each term, you'll see the regex pattern define possible variations in this manner.

Executing the Screen

With this pattern in hand, we can now finally produce the list of GLP-1-related articles whittled down from the 6 million that are available to us for processing. Luckily, because we spent time nicely formatting our abstracts into jsonl files, we can just pipe this file directly into rg (ripgrep, a fast grep alternative) and then into an output file.

cat ~/pubmed_title_abstract.jsonl \

| rg -i -P '\b(glp[\s-]?1|glucagon[\s-]+like[\s-]+peptide[\s-]?1|glp[\s-]?1[\s-]+receptor[\s-]+agonist[s]?|glucagon[\s-]+like[\s-]+peptide[\s-]?1[\s-]+receptor[\s-]+agonist[s]?|incretin(?:[\s-]+based)?|incretin[\s-]+mimetic[s]?|semaglutide|liraglutide|dulaglutide|exenatide|lixisenatide|albiglutide|efpeglenatide|tirzepatide|retatrutide|cotadutide|survodutide|mazdutide|orforglipron|danuglipron|beinaglutide|loxenatide)\b' \

> glp_1.jsonlFortunately, this scan completes in about 100 seconds, a far cry from the 73 days that it would have taken to screen via an LLM. In total, we're left with 10,892 articles to actually process for our knowledge base.

A quick post-mortem note: for those reading more closely, you'll catch that the command above is technically an abstract and title screen, as we do not do any pre-processing of the JSON object yielded by the cat command. So effectively, we're scanning the entire JSON object rather than just the abstract. On a whim, I went back and added a jq ".abstract" processing pipe step which would only pass the abstract to the regex pattern. This did have a performance impact, essentially doubling the processing time, and produced… huh… 104 fewer candidate articles. While I would be interested to go investigate these edge cases, we'll leave this as a future rabbit hole if I decide to make this process more methodologically rigorous.

Fetching Full-Text Articles

Now that we have a curated list of PMC IDs, we can use these to access our next data source, the PMC open access archive. In practice, the archive is hosted on AWS in an S3 bucket and can be accessed through the AWS CLI. Our output JSON contains the id alongside the title and abstract, so we can attempt to download the full PDF set via another piped command that parallelizes the download processes and shoves them in a folder.

cat glp_1.jsonl | jq -r '.pmcid' | \

xargs -P 32 -n 1 sh -c '

aws s3 cp --only-show-errors --no-sign-request \

"s3://pmc-oa-opendata/$1.1/$1.1.pdf" \

"pmc_pdfs/$1.1.pdf" >/dev/null 2>&1

' _10,558 downloads completed successfully, landing at around 22 GB of PDFs on disk. Not too bad.

Processing Full-Text Articles

We are now well and truly ready to begin our processing. To do this, let's quickly discuss how retrieval augmented generation (RAG) systems work and the internals of LitLake, which will influence the approach we take for processing these documents.

So What the Heck is RAG?

As articulated by the thousands of LLM-generated write-ups on RAG systems that continue to clutter my LinkedIn algorithm, the core concept of RAG is that an LLM will have a much easier time answering a user's questions accurately when it is provided additional context that contains the answer to said question.

For example, let's say you ask an LLM the following question:

How do I release the steering lock on a 2021 Toyota Prius?

Running this prompt with the model claude-3-haiku-20240307, you receive the following response:

Here are the typical steps to release the steering lock on a 2021 Toyota Prius:

- Make sure the vehicle is in the "OFF" position. The steering lock engages when the ignition is turned off.

- Apply firm pressure to the steering wheel while turning the key in the ignition. This helps overcome the steering lock mechanism.

- Turn the key to the "ACC" or "ON" position. This should disengage the steering lock.

- If the steering wheel still feels locked, you may need to gently rock the steering wheel back and forth while turning the key. This can help free up the lock.

- As a last resort, if the steering lock won't disengage, you may need to have the vehicle checked by a Toyota dealership or mechanic, as there could be an issue with the lock mechanism.

The key is to apply pressure to the steering wheel while turning the key. Go slowly and don't force it, as you don't want to damage the lock. If it doesn't release, have a professional take a look.

This is blatantly incorrect information. The 2021 Toyota Prius does not even have a key-based ignition system; it's push-to-start. Luckily for us, Toyota posted an owner's guide that has a page of information on how to do just this thing. Taking this page, converting it into markdown, and providing it as additional context produces the following prompt:

<context>

## FEATURES & OPERATIONS

---

### Tilt & Telescopic Steering Wheel

To ensure a comfortable driving position, you can adjust both the height and

the distance of the steering wheel.

* **Adjustment Lever**: A lock release lever is located on the steering column.

* **How to Adjust**:

1. Hold the steering wheel firmly.

2. Push the **lock release lever** down.

3. Adjust the steering wheel to your preferred **angle** and **length**.

4. Return the lever to its original position to secure the wheel.

* > **NOTE:** Do not attempt to adjust the steering wheel while the vehicle

is in motion.

---

### Steering Lock Release

If the steering wheel remains locked when you try to start the vehicle,

follow these steps:

1. Look for a flashing green indicator light on the **POWER** switch.

2. Check the multi-information display for a guiding message.

3. **To Release**: Push the engine switch while gently **turning** the

steering wheel to the left and right.

---

### Parking Brake

The parking brake is operated via a foot pedal located to the left of the

brake pedal.

* **To Set**: Depress the pedal fully.

* **To Release**: Depress the pedal a second time.

* When engaged, the **PARK** indicator will light up on the instrument panel.

---

12 E

</context>

How do I release the steering lock on a 2021 Toyota Prius?

Now running this, we get a much more accurate response from claude-3-haiku-20240307:

Based on the context provided, to release the steering lock on a 2021 Toyota Prius, you should follow these steps:

- Look for a flashing green indicator light on the POWER switch.

- Check the multi-information display for a guiding message.

- To release the steering lock, push the engine switch while gently turning the steering wheel to the left and right.

The key points are:

- Look for a flashing green indicator light on the POWER switch, which indicates the steering is locked.

- Check the multi-information display for any guidance on how to release the steering lock.

- To actually release it, push the engine switch while gently turning the steering wheel left and right.

This should help you unlock the steering column if it remains locked when you try to start the vehicle.

While it may seem quite intuitive that literally providing the correct answer within the prompt itself would improve the accuracy of the model's response, we did all of the legwork for actually finding the needed page of context ourselves. And of course, the context that we provided is only relevant to questions that directly reference this information. As soon as our user asks about anything that falls outside the scope of this single owner's manual page, we're back to square one.

The tricky part is providing the correct context to the LLM dynamically and without human intervention. To do this, we need to talk about embeddings.

What Are Embeddings, and How Are They Used?

While embeddings can be used for many things, in this context, they are a vector representation of the semantic meaning of blocks of text. If you'd like to know more, please read here.

Within RAG systems, chunks of documents–like the pages of the Toyota owner's manual–are typically indexed via pre-computed embeddings. When a user asks a question, it's also turned into an embedding. Then, the geometric similarity is computed between the question's vector and all document vectors. From there, the top n most similar documents are selected and provided to the LLM as context for its answer.

For LitLake, I took an opinionated approach, trying to simplify this process for on-device use cases. It's based on SQLite and uses the sqlite-vec extension to power its embedding search. Embeddings are generated via BAAI/bge-small-en-v1.5, which produces 384-dimensional vectors for each chunk of text. Apart from this, LitLake maintains a lightweight reference manager in order to map PDFs to references. What results is a headless, file-based library of sorts that has pre-computed semantic indexes for RAG workflows (more on the fun things you can do with this in a later).

For each full-text article that is inserted into a LitLake database, the following information is required:

- Id

- Title

- Author

- Publication Year

- The full-text PDF

Perfect! That's exactly the information I extracted in our JSON file… oop… nope, I forgot to extract the author and publication year from the original PubMed baseline. After a quick refactoring of our extraction script, we have our input prepared and can now insert references into our database. To do this, I created a single litlake.py script that handles processing a PDF end-to-end. Accessible to the CLI, a single upsert into a LitLake db looks like this:

uv run litlake.py upsert \

--db '/path/to/your/litlake.db' \

--source-system 'pmc' \

--source-id 'PMCXXXXXX' \

--title 'This is the Title' \

--authors 'Comma, Separated, Authors' \

--year 'YYYY' \

--abstract 'Abstract Text' \

--pdf '/path/to/your/article.pdf'Now, we just need to run this command for all 10,892 articles that passed our screening. Right?

PDFs Are Monsters in File Form

No! Unfortunately, the fact that we must accept PDFs as our full-text artifacts means that we have to deal with… well PDFs. The crux of the issue is that in order to create a semantic embedding, one must use plain text. And, PDFs are not plain text; instead, they are damn near a programming language unto themselves with a 756-page specification to boot. All this is to say, extracting a semantically faithful plain text version of a PDF is non-trivial and a huge headache. Good thing we can let someone else solve the issue!

Docling - The Best Darn Open-Source Document Processor in Town

While there are many approaches to this, which I may cover in another blog post, I'll only quickly cover Docling's approach for now. Extracting some sort of text from a PDF actually is not too hard. Even though the PDF specification is quite bloated, there are many libraries that allow you to extract single words or lines along with their bounding boxes. If this is not possible programmatically, you can always fall back to optical character recognition (OCR) models. These have been around for a while and can reliably extract text present within a picture. For each page, you would render the PDF as a picture then use OCR to extract the text on the page. Similarly to programmatic text extraction, they can produce a bounding box and a plain text "guess" of what word is within the bounding box. Tesseract is a JS-based OCR library that we can use to demonstrate this. Take the image below:

Tesseract OCR demo converting an image of the word "Hello!" into text with a bounding box

Tesseract OCR demo converting an image of the word "Hello!" into text with a bounding box

Using their online demo, this image can be easily converted into the string "Hello!". The red box drawn on top of the image represents the word's bounding box, essentially, a visual representation of the program's understanding of where the text actually appears within the image. While with single words, this approach produces clean text, thing get much messier as we begin to introduce more complex layouts; take the multi-column newspaper article below:

Multi-column newspaper article run through Tesseract's OCR demo with bounding boxes and annotated green arrows showing naive left-to-right reading order

Multi-column newspaper article run through Tesseract's OCR demo with bounding boxes and annotated green arrows showing naive left-to-right reading order

Once run through Tesseract's online demo, it produces jumbled paragraphs of text. Here's the exact output for the snippet highlighted in the picture above:

News Michael Delgado is who he has

always been: A guy that smiles and —

News Briefs............Page 2 | doesn't let the little things stand in the way.

We know that it's correctly identified where and what each word is–since it's drawn the bounding boxes correctly. However, when it comes to actually ordering the words in an intuitive reading order, it seems to be using a naive left-to-right reading approach that completely ignores the column format. I marked this with the green arrows. By no means is this Tesseract's fault; it's doing its job. How is it supposed to know that we as humans would naturally read this layout in a completely different way? This is what Docling and other similar PDF processing solutions bring to the table. They have developed what are called reading order models that predict the order in which text would be read by a human based on texts' bounding boxes and other factors. In this way, they can take the raw output received from these naive programmatic text extraction methods or OCR models and order the text to be more readable. An example of a possible output from one of these models:

News

News Briefs............Page 2 |

Michael Delgado is who he has always been: A guy that smiles and —

doesn't let the little things stand in the way.

This is, candidly, a gross over-simplification of what Docling does, and I'm sure it's not wholly accurate. I would encourage the mindful reader to go investigate the code themselves. But for our purposes, it suffices to say that Docling uses a confluence of models to reliably extract clean text from PDFs and represent it in a structured format called a DoclingDocument. Within the litlake.py script, we then use this object to serialize the PDF as markdown. This is what we actually then break down and embed for our semantic index.

The Final Product

Now that we understand all of the parts at play here, it's finally time to launch our processing workload that will convert our 10,892 PDFs into a semantically searchable database. For fun, here's a picture of what it actually ran on.

Elliot's magnificent PC workhorse, littered with cat toys, an old laptop hard drive, and a rotary phone

Elliot's magnificent PC workhorse, littered with cat toys, an old laptop hard drive, and a rotary phone

Unfortunately, I do not have $2K burning a hole in my pocket for a GTX 5090; instead, we are left with around 16 GB of RAM on my AMD Ryzen 5 3600 6-Core Processor and a whopping 6 GB of VRAM on my NVIDIA GeForce GTX 1660. But, while the processing power may be lackluster, we have a lot of time; after kicking off the workload, it took just around 18 hours for the processing to complete. I heard it wheezing the entire time as I ate dinner and breakfast. I suspect the batch processing that I implemented was quite inefficient, as I don't think I configured the Docling PDF parsing pipeline correctly in order to take advantage of all the optimizations available to me. I swear I could hear the PC's fan spinning faster every time Docling reloaded its OCR model back into memory. Nonetheless, the processing completed, and what was left was a single 3.6 GB file, which, for all intents and purposes, is just an SQLite file.

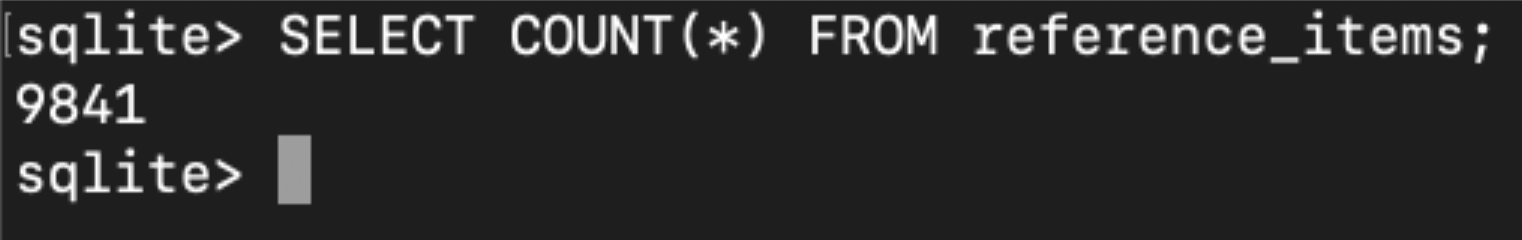

Kicking the Tires

Poking around via the interactive SQLite3 CLI, we can see that only 9,841 actually ended up being upserted. There's a leak in my pipeline somewhere, which I suspect is due to some sort of failed processing step that causes us to skip inserting the article, but yet again, this is a rabbit hole for another time.

SQLite3 CLI showing reference count query result

SQLite3 CLI showing reference count query result

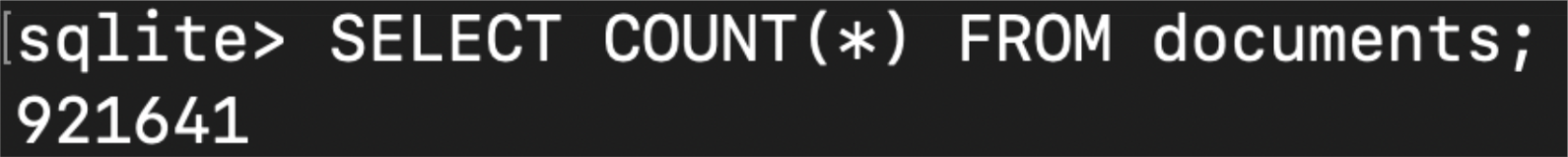

Counting all the document chunks that were actually embedded, we can see that we have a total of around 900,000 document chunks that we actually have embeddings for.

SQLite3 CLI showing document chunk count query result

SQLite3 CLI showing document chunk count query result

Using the search command in the litlake.py CLI, we can actually make use of our semantic index. It will embed our search query, then compute the geometric similarity of all documents in our database, returning structured lines of JSON that we can parse further.

uv run litlake.py search --db glp1.db "clinical trials of type 2 diabetes" -k 5{"doc_id": 817498, "reference_id": 8804, "kind": "title", "distance": 0.11225511133670807, "content": "Registered clinical trials targeting type 2 diabetes remission with pharmacological interventions."}

{"doc_id": 198087, "reference_id": 2505, "kind": "title", "distance": 0.12961117923259735, "content": "Clinical implications of cardiovascular outcome trials in type 2 diabetes."}

{"doc_id": 107555, "reference_id": 1414, "kind": "title", "distance": 0.13375326991081238, "content": "Continued efforts to translate diabetes cardiovascular outcome trials into clinical practice."}

{"doc_id": 440656, "reference_id": 5059, "kind": "title", "distance": 0.13631588220596313, "content": "Cardiovascular outcomes trials: a paradigm shift in the current management of type 2 diabetes."}

{"doc_id": 560891, "reference_id": 6241, "kind": "title", "distance": 0.15254369378089905, "content": "Antidiabetic Molecule Efficacy in Patients with Type 2 Diabetes Mellitus-A Real-Life Clinical Practice Study."}As you can see, we're given a structured output that contains metadata about the chunk itself and the content. As you can most likely tell, this database does not replace a full RAG workflow; instead, it just allows you to perform the search for relevant articles within your library via the terminal. The really fun part is then hooking this up to an agent that can execute terminal commands. It can then be an assistant for you, interacting with your library via the terminal for long-running research tasks.

A Plug and Play Research Agent

Quickly firing up Codex, OpenAI's terminal-based coding agent, allows us to have the agent interact with the database independently. Just for fun, I launched the following prompt on Codex 5.3 extra-high:

I would like you to use this database (feel free to interact with it via the

sqlite3 cli too) and the litlake.py script to deeply research the following

question: "What are the most prevalent harmful side effects of GLP-1 drugs

for non-diabetic users? Do these differ from those that are diabetic?" ONLY

base your answer on information you retrieve from the database.

Admittedly, this is quite a bad prompt, and I'm sure, if I ran this prompt 10 different times, I would get 10 different approaches and answer variations. In a future blog post, I'll write a proper harness for an agent to interact with databases in this manner. However, three weekends into this blog post, I'm just interested in understanding qualitatively what it would do.

Initially, the model did a fair amount of work that would have been moot if I had provided it the right context (e.g., investigating schemas, counting references, etc.), from there it created several scripts to screen references programmatically via keyword, and finally, after around 12 minutes of work, it yielded the following result:

Database-only conclusion: the most prevalent harmful side effects for non-diabetic GLP-1 users are gastrointestinal, especially nausea, vomiting, and diarrhea. Compared with diabetic users, GI side effects appear proportionally more prominent in non-diabetic cohorts, while hypoglycemia is much more prominent in diabetic cohorts.

I analyzed

glp1.dbdirectly with cohort keywording on title+abstract (non-diabeticGLP-1 refsn=375;diabeticGLP-1 refsn=3869) and adverse-event-context FTS queries across indexed text.

Side effect (AE-context, abstract-level) Non-diabetic (n=375) Diabetic (n=3869) Nausea 19 (5.07%) 155 (4.01%) Vomiting 15 (4.00%) 85 (2.20%) Diarrhea 12 (3.20%) 70 (1.81%) Constipation 5 (1.33%) 20 (0.52%) Hypoglycemia 5 (1.33%) 353 (9.12%) Pancreatitis 5 (1.33%) 46 (1.19%) Gallbladder/biliary 4 (1.07%) 23 (0.59%) Supporting evidence from records in the DB:

ref 8499 (2025): in obese adults without diabetes, overall GI AEs were higher vs placebo (RR 1.86); tirzepatide RR 2.94, semaglutide RR 1.68; semaglutide increased cholelithiasis risk.ref 8954 (2025): in overweight/obese adults without diabetes, tirzepatide increased nausea (RR 3.11), vomiting (RR 5.94), diarrhea (RR 2.92), constipation (RR 2.85), and discontinuation due to AEs (RR 2.29).ref 5495 (2022): semaglutide in obesity without diabetes increased GI AEs (RR 1.59), discontinuation (RR 2.19), and serious AEs (RR 1.60), with serious GI/hepatobiliary events noted.ref 6670 (2024): in with-vs-without-T2D tirzepatide review, AEs were mainly GI; nausea/diarrhea were lower in T2D than non-T2D; vomiting similar; discontinuation due AEs ~3–7% without major between-group difference.ref 134 (2010)andref 3167 (2020)(T2D-focused): GI symptoms were the most common AEs; hypoglycemia was generally low with GLP-1 therapy alone and rose mainly with sulfonylurea/insulin co-therapy.Direct answer to your question:

- Most prevalent harmful side effects for non-diabetic users: nausea, vomiting, diarrhea (then constipation), with smaller but present biliary/pancreatic safety signals.

- Do they differ from diabetic users? Yes. GI effects are the dominant signal in both groups, but relatively more prominent in non-diabetic cohorts; hypoglycemia is the main side-effect signal that is substantially more prominent in diabetic cohorts.

I have several serious criticisms with this response, including the absolute lack of auditability yielded by Codex, but generally, it was quite fun to watch this thing reason and design its own mini-screening process. But, I strongly suspect we can do much, much better with the right harness.

If you're interested in poking around in the file yourself, check it out here! You can grab the litlake.py script here.

Great! That's it. See ya in the next one,

Elliot